A world of color and texture could soon become more accessible to people who are blind or have low vision, via new software that narrates what a camera records.

The tool, called WorldScribe, was designed by University of Michigan researchers and will be presented at the 2024 ACM Symposium on User Interface Software and Technology in Pittsburgh.

The study is titled “WorldScribe: Towards Context-Aware Live Visual Descriptions” and appears on the arXiv preprint server.

The tool uses generative AI (GenAI) language models to interpret camera images and produce text and audio descriptions in real time, helping users become aware of their surroundings more quickly. It can adjust the level of detail based on the user’s commands or on how long an object stays in the camera frame, and its volume automatically adapts to noisy environments such as crowded rooms, busy streets and places with loud music.
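As a rough illustration of how narration volume could track ambient noise, the sketch below maps a measured noise level to a playback volume. It is not taken from the WorldScribe study: the function name `playback_volume`, the decibel bounds and the volume range are all assumptions chosen for the example.

```python
def playback_volume(
    ambient_db: float,
    quiet_db: float = 40.0,   # assumed noise level of a quiet room
    loud_db: float = 80.0,    # assumed noise level of a busy street
    min_volume: float = 0.3,  # assumed softest narration volume (0.0 to 1.0 scale)
    max_volume: float = 1.0,  # assumed loudest narration volume
) -> float:
    """Return a narration volume that rises linearly with ambient noise."""
    # Position of the ambient level between the quiet and loud bounds.
    fraction = (ambient_db - quiet_db) / (loud_db - quiet_db)
    # Clamp so very quiet or very loud readings stay inside the volume range.
    fraction = min(1.0, max(0.0, fraction))
    return min_volume + fraction * (max_volume - min_volume)
```

With these assumed bounds, a reading at or below 40 dB gives the minimum volume, a reading at or above 80 dB gives the maximum, and readings in between scale linearly.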

The tool will be demonstrated at 6:00 pm EST Oct. 14, and a study of the tool, which organizers have identified as one of the best at the conference, will be presented at 3:15 pm EST Oct. 16.

“For us blind people, this could really revolutionize the ways in which we work with the world in everyday life,” said Sam Rau, who was born blind and participated in the WorldScribe trial study.

“I don’t have any concept of sight, but when I tried the tool, I got a picture of the real world, and I got excited by all the color and texture that I wouldn’t have any access to otherwise,” Rau said.

“As a blind person, we’re sort of filling in the picture of what’s going on around us piece by piece, and it can take a lot of mental effort to create a bigger picture. But this tool can help us have the information right away, and in my opinion, helps us to just focus on being human rather than figuring out what’s going on. I don’t know if I can even impart in words what a huge miracle that truly is for us.”

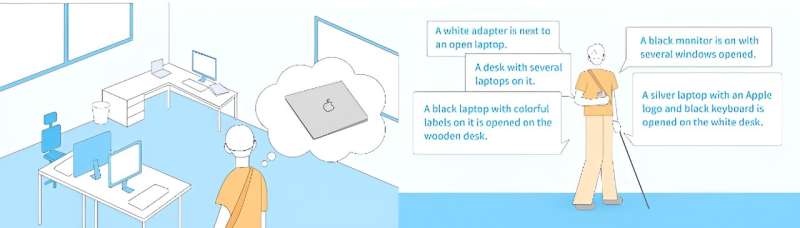

During the trial study, Rau donned a headset equipped with a smartphone and walked around the research lab. The phone camera wirelessly transferred the images to a server, which almost instantly generated text and audio descriptions of objects in the camera frame: a laptop on a desk, a pile of papers, a TV and paintings mounted on the wall nearby.

The descriptions constantly changed to match whatever was in view of the camera, prioritizing objects that were closest to Rau. A brief glance at a desk produced a simple one-word description, but a longer inspection yielded information about the folders and papers arranged on top.
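The prioritization described above can be pictured as a simple sort by distance. The sketch below is only an illustration under stated assumptions: the article does not say how WorldScribe estimates distance or orders its output, and the `DetectedObject` type, its `distance_m` field and the `order_for_narration` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectedObject:
    label: str
    distance_m: float  # hypothetical distance estimate, in meters


def order_for_narration(objects: list[DetectedObject]) -> list[DetectedObject]:
    """Return the objects with the closest first, so they are described first."""
    return sorted(objects, key=lambda obj: obj.distance_m)


scene = [
    DetectedObject("painting", 4.0),
    DetectedObject("laptop", 0.8),
    DetectedObject("TV", 3.2),
]
ordered_labels = [obj.label for obj in order_for_narration(scene)]
```

Here `ordered_labels` lists the laptop first, then the TV, then the painting, mirroring the idea that nearby objects are narrated before distant ones.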

The tool can adjust the level of detail in its descriptions by switching between three different AI language models. The YOLO World model quickly generates very simple descriptions of objects that briefly appear in the camera frame. Detailed descriptions of objects that remain in the frame for a longer period of time are handled by GPT-4, the model behind ChatGPT. Another model, Moondream, provides an intermediate level of detail.
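One way to picture this switching is a lookup on how long an object has stayed in view. The sketch below is a minimal illustration, not the researchers' implementation: the article gives no timing thresholds, so `BRIEF_SECONDS` and `SUSTAINED_SECONDS` are invented values, and `choose_model` is a hypothetical function that only returns a model name rather than calling any model.

```python
# Assumed dwell-time thresholds in seconds; the article gives no actual numbers.
BRIEF_SECONDS = 1.0
SUSTAINED_SECONDS = 3.0


def choose_model(dwell_seconds: float) -> str:
    """Pick a description tier from the time an object has been in the frame."""
    if dwell_seconds < 0:
        raise ValueError("dwell_seconds must be non-negative")
    if dwell_seconds < BRIEF_SECONDS:
        return "YOLO World"  # quick, very simple descriptions
    if dwell_seconds < SUSTAINED_SECONDS:
        return "Moondream"   # intermediate level of detail
    return "GPT-4"           # detailed descriptions for sustained views
```

Under these assumed thresholds, a brief glance of 0.2 seconds would select YOLO World, a 2-second look would select Moondream, and a 10-second inspection would select GPT-4.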

“Many of the existing assistive technologies that leverage AI focus on specific tasks or require some sort of turn-by-turn interaction. For example, you take a picture, then get some result,” said Anhong Guo, an assistant professor of computer science and engineering and a corresponding author of the study.

“Providing rich and detailed descriptions for a live experience is a grand challenge for accessibility tools,” Guo said. “We saw an opportunity to use the increasingly capable AI models to create automated and adaptive descriptions in real-time.”

Because it relies on GenAI, WorldScribe can also respond to user-provided tasks or queries, such as prioritizing descriptions of any objects that the user has asked the tool to find. However, some study participants noted that the tool had trouble detecting certain objects, such as an eyedropper bottle.

Rau says the tool is still a bit clunky for everyday use in its current state, but he would use it every day if it could be integrated into smart glasses or another wearable device.

The researchers have applied for patent protection with the assistance of U-M Innovation Partnerships and are seeking partners to help refine the technology and bring it to market.

Guo is also an assistant professor of information within U-M’s School of Information.

More information:

Ruei-Che Chang et al, WorldScribe: Towards Context-Aware Live Visual Descriptions, arXiv (2024). DOI: 10.1145/3654777.3676375

Citation:

AI-powered software narrates surroundings for visually impaired in real time (2024, October 10), retrieved 10 October 2024 from https://techxplore.com/news/2024-10-ai-powered-software-narrates-visually.html
